Thanks to everyone who has enjoyed my take on mappy things via this blog. But times move on, and I've not blogged here for nearly 2 years other than my end-of-year summaries. I'm therefore mothballing this blog and setting off on a new venture at cartoblography. Join me if you wish.

Wednesday 28 July 2021

Tuesday 22 December 2020

Favourite maps from 2020

So here I am once more, in the playground of the broken maps. One more entry in a diary self-penned.

But wait, the last blog I wrote was my favourite maps of 2019 exactly a year ago. Have there been no #cartofail teaching moments this year? Have I just missed them all? There have been a few gems. But as I also noted last year they are getting fewer and farther between and I feel that generally the trend is still towards better maps in the wild. That and I just couldn't be bothered writing blogs as I was busy writing a book.

I was even thinking of not compiling this annual list but a quick poll on the Twitters got a good reaction. So here is my annual list of just some maps that piqued my interest this year either because they had some interesting aesthetic, were novel in some way, were just really well executed, or if I just liked it for no good reason other than I liked it. There's no order. There's plenty of COVID-19 cartography (because, 2020). You may well disagree entirely with my selections or question why certain maps are in or not but that's the fun thing about personal lists. You can always make your own.

Links to the original maps and sources are in each title and I'd encourage you to head to the originals rather than rely on my low-res grabs.

Here we go...

COVID-19 dashboard by Johns Hopkins University

I'm just going to get this one out of the way first. I do not like the map because of the projection and symbology choices BUT maps sometimes don't need to be the story. What impressed me about the JHU dashboard was the way it signaled a viral moment for maps on the internet. The simple action of building a picture of the incidence and prevalence of COVID-19 using numbers on a web page provided a vital resource for engagement. There are better places to grab the data from too but as an example of viral mapping, this is never going to be beaten.

Stellar cartography! A map of the 550,000 scientific observations made to celebrate the Hubble Space Telescope's 30th anniversary. It's just a thing of beauty. What really appealed to me about the map was what lies behind the map. Nadieh wrote up a detailed commentary on the decisions behind the design, which shows just how meticulous her approach was and how many small design decisions were necessary. The end piece, as with any great piece of cartography, is the sum of all these well executed parts but it was fascinating to see all the iterations that never made the final map.

World map of night trains by Jug Cerovic

Well it had me at schematic map as Jug simply nails this genre-defining example. It's a map of all the sleeper train routes in the world using the tried and trusted (but hard to apply) diagrammatic approach. I really like the 'blobbification' of the coastlines that allows him to give the schematic detail a semblance of reality while supporting the strict encoding of line angles at horizontal, vertical and 45 degrees. Colour is harmonious and the labels and icons sit well and add context to the map.

How the coronavirus spread in Hong Kong by Adolfo Arranz

This is simply stunning. It's visual data journalism at its peak. It's an infographic, but also a flow map with the width and colour of the lines encoding quantity and the difference between imported and localised cases of coronavirus. The central bar shows the broad split but the genius, the GENIUS is the use of white space towards the bottom left that outlines Hong Kong itself. It's such a clever touch that it's easy to miss it. That sounds like an oxymoron because you ordinarily would make what needs to be seen, seen. But by making it unseen, you become curious. You look at it and ask yourself is it Hong Kong? It sure looks like it. It IS! And that's the reward.

The United Fonts of America by Andy Murdock

A map of 222 place names in the United States labelled in the font of the same name. Because, why not? And I do enjoy this level of dedication to carto-nerdery. I even appreciate the write-up that accompanies the map and the observation that 222 isn't the answer. It's just that Andy had to stop at some point because the more he looked, the more he found.

Deaths involving COVID-19 by James Cheshire

In the early days of the coronavirus pandemic most of the maps that were being made were choropleths, and many were making the same mistake of mapping totals, not rates. It's understandable that people wanted to know totals but the #cartofails in early 2020 were often simply people with good intentions hurriedly trying to make a map, any map. I got involved with numerous media organisations to lead them toward a better way of showing the data which is something I'm personally proud of in my own work this year. The ability to have a small influence that improves the maps for millions of people is humbling. But as you'd expect some of those who know their craft were already doing things differently and better. Here, James took essentially the first month of UK data as the pandemic took hold and made a dot density map. Simple technique but expertly crafted. White on somber black gave a location (randomised within the administrative area) to the 20,283 deaths to date. As James explained he wanted to give each person their own dot rather than grouped with others. A very human approach to cartography. The clever use of a single colour to outline places with more than 50 deaths provides an additional visual metric.
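For anyone curious how those two ideas look in practice, here's a minimal sketch in Python. It is not James's method or anyone's production code. The function names, the square "administrative area" and the numbers are all invented for illustration, and the dot placement uses simple rejection sampling, which is just one of several ways to randomise a point within a polygon.

```python
import random


def rate_per_100k(count, population):
    """Turn a raw total into a rate per 100,000 people."""
    if population <= 0:
        raise ValueError("population must be positive")
    return count * 100_000 / population


def point_in_polygon(x, y, polygon):
    """Ray-casting test: is (x, y) inside the polygon (a list of (x, y) vertices)?"""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be crossed.
        if (y1 > y) != (y2 > y):
            # x position where this edge crosses that horizontal line.
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def random_dots(polygon, count, rng):
    """Place `count` dots at random inside `polygon` by rejection sampling.

    Assumes the polygon has a non-zero area, otherwise no candidate is ever accepted.
    """
    xs = [vertex[0] for vertex in polygon]
    ys = [vertex[1] for vertex in polygon]
    dots = []
    while len(dots) < count:
        # Draw a candidate in the bounding box and keep it only if it falls inside.
        x = rng.uniform(min(xs), max(xs))
        y = rng.uniform(min(ys), max(ys))
        if point_in_polygon(x, y, polygon):
            dots.append((x, y))
    return dots


# Hypothetical area: a 4 x 4 square with 25 deaths and 40,000 residents.
area_outline = [(0, 0), (4, 0), (4, 4), (0, 4)]
dots = random_dots(area_outline, 25, random.Random(2020))
rate = rate_per_100k(25, 40_000)
```

The rate is what you'd shade a choropleth with, while the dots keep one mark per person without implying they show exact locations.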

The Highlands of Scotland by Alasdair Rae

Orientation of places on a map has to be neither 'north up' nor fixed to a standard page size. I like this map for those simple reasons as Scotland is rotated to make best use of a landscape orientation, and then extends the page size so the shape fits the page while not leaving any particularly awkward empty space. What makes this particular view special is the decision to have the viewer look directly up the Great Glen that sits perfectly in the centre of the map. I also really enjoyed the richness of the non-standard colours and the subtlety of the label colouring. I would agree with Alasdair's own assessment that "if you're going to use circular place symbols then be careful not to make the one for Oban almost exactly the same size as the 'O' (OOBAN)." It's a commonly seen mistake but worth remembering to avoid people potentially confusing symbols with labels.

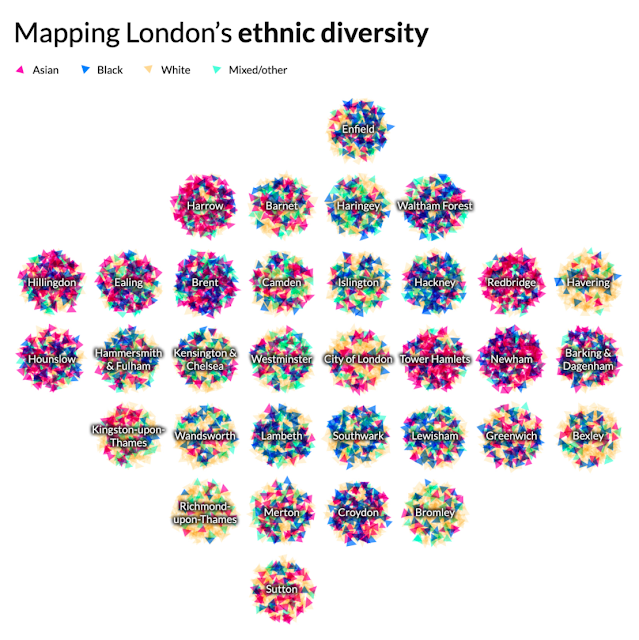

Mapping London's ethnic diversity by Niko Kommenda

It's a sort of Ben Day dots/Halftone/CMYK mashup on a gridded cartogram and I like the simplicity of the overall effect that shows the broad ethnic mix of each London Borough. Often simple ideas lead to some of the most impactful work, here with the mixing of individual colours leading to an overall sense of predominance in each borough. It's just nice to look at but you can dig into each borough a bit. Interesting choice to put the labels on top so boroughs with long names will obscure more compared to those with short names. What might have been interesting would be to colour the label in the predominant colour of the mix of symbols below.

How web-connected is China by Chinapower

Another gridded cartogram of charts. This is an interactive (so go take a look at the original). Interactivity really does push the map to become a canvas for more complex information envisioning. The simplicity of the scaffold belies the amount of information and data points shown. The hover over event brings a specific chart into focus beautifully and instead of offering map or cartogram they've integrated the real geography by positioning the map within the cartogram in the space where Mongolia would be. Great layout decision. As you move across the graphs, the real map reacts to bring focus to the geographical where. And finally, the way in which the colours in the map are carried through to the charts also helps cement the link between real and abstract.

Geological map of Iran by Sean Conway

Draping historic maps over contemporary digital elevation models isn't new but Sean has made this aesthetic his own with the way he uses lighting to create a beautiful three-dimensional image on a flat canvas. I particularly like this example of his work because of the use of lighting to create three-dimensional legends that appear to nest the geological sub-categories. His store includes a great number of these types of maps and they're just pleasing to look at. Unless knowing something about the 1957 vintage of Iranian geology rocks your boat in which case it's doubly good.

Painting by numbers by Elana Schtulberg

Cartographers have often been demeaned as people only interested in colouring in. Yet that's exactly what's going on here and it's terrific. Mashing up some real geographical boundaries and their distinctive shapes with a photograph and extracting colour values with which to 'paint' each area is the simple technique. It renders an interesting outcome evocative of those old school paint-by-numbers painting kits (are they still available?). Lots of different ways to make these depending on your tech of choice and Elana's blog explains her approach. It's a pleasing artistic rendering using familiar map shapes.

How Decades of Racist Housing Policy Left Neighborhoods Sweltering by Brad Plumer and Nadja Popovich

The New York Times have become masters at the art of scrollytelling and this particular story uses maps as a central actor in the story. You have to go to the story to experience how well they delineate the commentary, and explain the historic impact of redlining on contemporary neighbourhoods. The story includes several maps that are cohesive in their overall design but which break down elements of the spatial narrative in different ways and to support different aspects of the story. It's an example of where a collection of maps each does its own job rather than trying to cram everything on one map and expecting the reader to disentangle the story.

All of us united by the Biden-Harris campaign

Map outlines have often been used as a framework for artistic endeavor. I liked this illustration. The use of colour was emotive and filling the map with diversity leaves a very clear and indelible message.

Manhattan by Alex Bailey

Everything about this map is wonderful. Made by 14 year old Alex in two weeks, it's a 6ft 1:12,000 scale model. The detail, and attention to detail is next level. Lego + maps = AWESOME.

Panoramic map of the Alps by Eric Knight

Clearly this screen grab thumbnail doesn't do justice to this beautiful map (or any of the other examples on Eric's site) but the art of the panorama is not dead. Channeling the likes of Heinrich Berann this is a stunning painted landscape albeit digitally created rather than with pen and paint. Eric still manages to build a very human touch into the work and the labels in particular sit effortlessly in the landscape. There's attention to detail throughout and I particularly like the slight curve of the horizon that immediately tells you you're looking at quite an expanse.

Covid-19: The global crisis - in data by the Financial Times Visual and Data Journalism team.

Another tour de force from the FT team with this scrollytelling piece that uses words simply as the glue holding the wonderful array of maps, graphics, and interactives together. The colour palettes provide excellent contrast, not only leading the eye, but showing clearly what the reader is supposed to get from each map or graphic. There have been many plaudits for the line graphs and other charts John Burn-Murdoch (and others) created in 2020 to plot the spread of the virus and this article incorporates a copious spread of examples. It's also worth noting that the FT, through blogs and social media, has gone to great lengths to provide explainers to their work to aid understanding. They're not the only ones (FiveThirtyEight did the same with their presidential election web site) but it's a good trend. Even the most well constructed maps and graphs can always do with a little extra help so that people are able to read them better.

Grave Smoky Mountains National Park by Aaron Koelker

Establishing the right look for a map goes a long way to how people feel when they look at it. This map is ridiculously dark with a very bold muted colour palette but it works because of the subject matter. It's an exploration of cemeteries in the Great Smoky Mountains National Park. It uses an isometric projection to keep scale constant throughout. There's unique and well-designed symbology. There's interest in the detail provided by the written components. It has veils of cloud-cover (wisps of smoke?), a well integrated locator map and excellent use of white space. I could go on. My most favourite component is the neatline which isn't a line. It's composed of text that lists epitaphs from various headstones. Spectacularly creative. A work of cartographic art.

2020 Election map by Randall Munroe (xkcd comic)

Given my predilection for election mapping (did I mention I have a book on this very topic coming out in early 2021?) I could have included any number of brilliant news media efforts that kept us glued to the 2020 presidential election result. A special shout-out to FiveThirtyEight, The New York Times and The Washington Post for their exhaustive coverage. There were geographical maps and cartograms galore to feast upon. Yet Randall's map at once does something very simple. He positions symbols in roughly populated places and gives them a colour. It's a dasymetric dot density map of sorts and it does a great job of negating all that empty unpopulated space from the more commonly seen red/blue result by state map. As you'd expect, in a contest where 51.3% voted for Joe Biden, and 46.9% for the other guy, the map looks very even. It's a more human map because it puts every vote on the map rather than just shouting about who won each state through a large block of colour.

Carrot production in France by Romain Lacroix

A map with proportional, extruded carrots atop a knitted base. Struggles with occlusion and scale front to back as any 3D perspective map does but...carrots. Romain made this map for day 12 of Topi Tjukanov's #30daymapchallenge during November on Twitter. Day 12 was to make a map not using GIS software. There was no better map that day, or pretty much any day.

Commuting in corona times by Kera Till

There were plenty of coronavirus takes on the tube map this year. This one by artist Kera Till was one of the first in March. I generally wince at the various uses of the tube map as a way of representing something other than the tube itself but this gets a pass. It pretty much sums up life in 2020, and I for one can't wait to get back on the real tube some time soon.

Naismith International Park by Kirk Goldsberry

And squeaking in at the end of 2020 is this playful map of the 2019-2020 basketball season in the US. It's all for the love of the sport as elevation encodes scoring positions - higher ground is an area where more points were scored from. And Kirk then went to town building the map into a US National Park Service map with all the iconic symbology, colours, typography and layout you'd expect. It's not just an excellent homage to NPS maps, all of the features relate to stars of the game itself. Even as someone who has virtually zero understanding of the sport I really appreciate the dedication to cramming so much of the sport onto the map. A proper map mashup!

And that's just some of my favourites. I likely missed a load I had at one time intended to be included but not to worry, there's been more important things to focus on this year. And with that, I'll leave you with just one more map which wins the 2020 Gromit award for poor cartography. There were a few contenders this year such as the CNN live election map, or some really rather horrid coronavirus maps, all the usual rainbow coloured maps, non-normalised choropleths, splodgy heat maps and so on. But this just hurts the brain.

Property map by anon.

With best wishes for the holidays and a far better 2021. Hopefully the 2021 roundup won't feature so many coronavirus maps.

Ken

Monday 30 December 2019

Favourite maps from 2019

There's clearly still some major issues to address in the world of improving people's diet of maps. Persuading people that Web Mercator really isn't either the best choice, or even an uncontrollable default, still needs more work. So does the incessant use of rainbow colours on many, many maps. Just stop it. Please! If your work suffers from a #cartofail like this then you will not see it in this particular blog post that's firmly focused on the good stuff.

I always say that great work can do much to show people a better way so in that spirit, here's a bunch of maps that I saw in 2019 that I really liked. It's not a top ten. I saw many more I also really liked. There's likely many great maps I never even saw so it cannot be considered complete or comprehensive. They're in no particular order either but it's just a record of what I found good in this year's world of cartography. I'd also encourage you to go to the originals via the links. All I've included here are some screen grabs.

Mapping America's wicked weather and deadly disasters by Tim Meko (Washington Post)

Maps took centre stage in this scrollytelling article, and each was well crafted, vibrant and insightful. They're simple in scope but that doesn't mean simple maps. They're just pared back perfectly to fit web delivery. Great examples of contemporary web cartography. Six maps in total. All working together as a coherent set. Here's one:

Shinkansen Map by Jug Cerovic

I have a slight fetish for transit maps (as I'm sure most know) but I'm always interested in new takes. Jug's work in redesigning many of the world's major transit systems is phenomenal and worth checking out in its own right. Here, he takes a wonderfully artistic approach to Japan's bullet train network around the rising sun illuminating the north-west of the layout. Genius!

My friend and colleague Ben wasn't the first to make maps out of emoji but his efforts at making them from 240 characters in Twitter was fun and spawned a whole raft of efforts from many other people. I particularly liked his playful riff on the ongoing discussion of the Shetland Isles and inset maps which came to the fore in 2018 and still manages to be a source of cartographic humour.

Resilience in the Iroise Sea by Le Monde

This is one of 40 maps in the 'The time of the island' exhibition that took place in Marseille, France, this year, on the Geopolitics of Islands. It's just exquisite. Almost an historic, retro aesthetic but the layout, the linework, the colours, the typography - all perfectly harmonious. I particularly like how the north arrow and scale bar are incorporated into the map's frame.

Income distribution in Switzerland by Angelo Zehr in SRF News

Bivariate choropleth maps are hard. You have to limit the number of classes to an absolute minimum, maintain a sensible classification, and still allow the reader to see the relationship between two variables. This map does that job perfectly (also on the interactive version). But what I really like about this map is there's no spurious shading where there are mountains and no people. All too often we overlay arbitrary enumeration areas across geographies that exhaust space but fail miserably to show the differences between where people are and where they are not. I love that this map made the effort, and also still managed to add interest with a hillshade. It's Switzerland after all.
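To show why the class count matters, here's a minimal sketch in Python of how two variables might be boiled down to a single bivariate class. I don't know how the SRF map was actually classified. The 3 x 3 scheme, the tercile breaks and the function names are all my own assumptions for illustration.

```python
def tercile_breaks(values):
    """Return the two break values that split `values` into three roughly equal groups."""
    ordered = sorted(values)
    n = len(ordered)
    return ordered[n // 3], ordered[(2 * n) // 3]


def classify(value, breaks):
    """Return 0 (low), 1 (middle) or 2 (high) for a value given two breaks."""
    low_break, high_break = breaks
    if value < low_break:
        return 0
    if value < high_break:
        return 1
    return 2


def bivariate_class(a, b, breaks_a, breaks_b):
    """Combine the classes of two variables into one index from 0 to 8.

    The index picks one cell of a 3 x 3 legend: rows for variable a,
    columns for variable b.
    """
    return classify(a, breaks_a) * 3 + classify(b, breaks_b)


# Hypothetical values for two variables measured across nine areas.
variable_a = [1, 2, 3, 4, 5, 6, 7, 8, 9]
variable_b = [9, 8, 7, 6, 5, 4, 3, 2, 1]
breaks_a = tercile_breaks(variable_a)
breaks_b = tercile_breaks(variable_b)
classes = [
    bivariate_class(a, b, breaks_a, breaks_b)
    for a, b in zip(variable_a, variable_b)
]
```

Three classes per variable already means nine colours in the legend. Go to four and you have sixteen, which is why keeping the classes to a minimum matters so much.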

Trump Meltdown by Michael de Adder

Cartoons and maps have always gone well together and in the expert hands of political cartoonists they really take centre stage. I love this cartoon that shows Trump clinging on for dear hope to Florida as he drags the USA to even murkier depths. The bemused fish perhaps represents what everyone else is thinking as they sit back and watch the current political situation unfold.

Heating of the Arctic by Greg Fiske

November 2019 saw a fantastic Twitter #30DayMapChallenge which had contributions from many and is well worth a trawl. The concept was simple: for the theme of the day, create a map of some data. The time limit was almost self-imposed so the maps were often quick and dirty, but that didn't mean they lacked beauty and great design. Maps by Jo Wood and Craig Taylor, in particular, caught the eye. But the single most interesting map I saw was this. It's a view of the data I'd not seen before and that always makes you stop and pause. This may have been made quickly but it's clean, arresting and informative.

The Man Behind the Maps by James Niehues

Not a map, but a collection of maps representing the career of legendary ski resort artist James Niehues. If you've ever been skiing or snowboarding then you've likely used a trail/piste map illustrated by James as he embodies the latest of a small line of talented panoramists (Heinrich Berann, Hal Shelton, Bill Brown). This book came about through a Kickstarter project that went viral. Now, instead of tattered, soggy maps you have to throw away after a few days on the slopes, they are beautifully collected in this magnificent book with all sorts of background notes. A true feast for the eyes of anyone who loves mountain cartography.

Fimbulheimen in Dronning Maud Land, Antarctica by Anders Skoglund (Norwegian Polar Institute)

This map came away with the big awards at the 2019 International Cartographic Conference in Tokyo and rightly so. It won on so many levels. It was huge. At 1:250,000 but measuring over 2.5m long and a metre tall it was stunning to see such a large high resolution satellite map of this part of Antarctica. Was the size just for capturing attention? No. The size of the map juxtaposes the sparse, barren, yet stunning landscape punctuated with small crops of intense detail. The map is littered with skillfully placed and detailed typography. If you've got a wall big enough you can download it yourself from the link above because clearly this blog cannot do it justice.

I give my good friend John Nelson a lot of ribbing for his firefly symbol creation. Truth is I love it, but as with any symbol scheme it often gets mis-used (i.e. applied to any dataset for the hell of it regardless of whether it's actually suitable or not). So to see the approach used on a map of bioluminescence was perfect. But this map goes well beyond just a good match of symbol to subject. The overall atmosphere is engaging. The layout is full of detail yet not overcrowded. There's description to aid the reader's interpretation and best of all, the tilt of Australia to make the map work within the page size and shape, and to provide space for the other marginalia is the best trick.

An Atlas of Space by Eleanor Lutz

There was a time back in 2016 when Eleanor gave us simply a beautiful portion of Mars but this year she released a collection of ten maps of planets, moons and outer space. It's a wonderful collection of different design approaches and new expressions of cartographic creativity. Colourful landscapes of natural and human impacted phenomena which are all made programmatically too. And this work is her part-time gig. She's studying for a Biology PhD in disease vectors of mosquitos.

Wasabi Pea map by Anton Thomas

OK, so, drink was involved. At the NACIS 2019 conference what better way to divest of a bowl of inedible wasabi pea bar snacks than make a map out of them? Trouble is, in a bar full of cartographers there's only really one person who can carry out the task of making a world map without downloading some map data and churning it through some software. Anton Thomas got to work, captivating the bar and, from memory, made this perfect little world map. The best bit - not Mercator (though to be fair it's hard to determine its exact properties). And then it was gone...

The end of the world by Eloise Field (age 10½)

Children's maps are a whole magnificent cartographic sandpit in their own right. The Petchenik Children's map competition at the 2019 International Cartographic Conference in Tokyo showcased some incredible work (plus the usual eyebrow-lifting entries that might have had some, ahem, adult help). You can see the winning entries here. But my favourite children's map this year wasn't part of the exhibition at all. It was drawn by my niece, Eloise, as part of her school work. The task was to create a drawing that represented 'the end of the world'. The cartographic force is strong in this youngling.

Sharpiegate map by Kenneth Field, Anthony Robinson et al.

And finally, I don't normally put my own stuff in these lists (that's for others to judge according to their own tastes) but the world is in a weird place and with the apparent Presidential modification of an official NOAA map of Hurricane Dorian I felt this worthy of inclusion. Anthony Robinson and I concocted a bit of fun in the wake of what became known as #sharpiegate. We made some sharpie "official cartography pens" and I took a blank map to the 2019 NACIS meeting in Tacoma WA. Simple idea - leave the map in public for a few days and see what happens. It's cartographic performance art. Most maps show a point or period in time. This map is of its time. It's a collection of dozens, if not hundreds, of people's comments, drawings, additions, modifications and so on. There's some very famous cartographic hands across this work. It was fun.

Agree? Disagree? I liked them all for many different reasons.

Happy Mappy New Year to you all.

Tuesday 1 October 2019

Trump's maps of dominance

The subject has been written about extensively. Perhaps the classic text remains Professor Mark Monmonier's "How to Lie with Maps" which explains how geography and the themes we map can be modified and manipulated in various ways, sometimes innocently, sometimes for persuasion or propaganda. Professor Jerry Brotton's film on the subject is also well worth a watch.

Donald J. Trump, 45th President of the United States, has become a master of the use of the map to assert his agenda. In his early days in office he presented a map of the results of the 2016 Presidential election to Reuters journalists and exclaimed "Here, you can take that, that's the final map of the numbers. It's pretty good, right? The red is obviously us."

Despite the map garnering considerable discontent, he wasn't wrong. If I had just been elected Republican President I would likely have used exactly the same map to illustrate the vast swathes of the country that I'd just won; that I'd turned red, perhaps against the odds.

The same map was seen enlarged, framed and put up on the wall of The White House soon after. Of course you would. You'd want to wander round your home and office and bathe in the glory of your victory, and to pause with visitors to note how red the country was. And reporter Trey Yingst caught the moment.

There are many other ways to map the results of that (and any other) election. I've made a gallery of them and the point here is simply to note that what Trump did wasn't wrong, but, rather, he selected a representation of his victory that used geography, data and cartographic techniques to create a map that suited him; that asserted his power, position and dominance.

Remember, the margin of victory in the 2016 Presidential election was slim (and Clinton won the popular vote) but imagine if the result had tipped slightly in Clinton's favour? Would this be the map she'd hang on the wall? Almost certainly not. Clinton would have used the same data which would have shown her with a marginal victory, but the map would have looked very different. More blue. And she wouldn't have been wrong either.

Towards the end of August 2019, Trump was also embroiled in another map mystery coined #sharpiegate. An official NOAA map of the possible path of Hurricane Dorian had been manually modified by the addition of a line, drawn by a felt-tip Sharpie pen, so the cone of uncertainty extended beyond the official extent so it incorporated part of Alabama.

It's suggested that this was to support a previous statement by Trump that "Alabama looks like it's going to get a big piece of [the hurricane]". The resulting furor centred on the modification of an official document, the falsifying of statements relating to the forecast, the sharing of misinformation and the way in which someone (allegedly Trump himself) had changed the map to suit a different narrative. This is a more serious case of map misuse. It undermined the official body responsible for producing the maps. It had the potential to be scaremongering and dangerous to people and property who were not in any way going to be affected. Trump denied all knowledge of how the extra mark was put on the map but it's a small leap between hand-drawn modification and more sinister manipulation of official maps that are received as trusted mechanisms of the truth.

Fast forward to late September 2019 and Lara Trump (daughter-in-law to Donald) tweeted another map as a response to the announcement that Trump was to face impeachment proceedings. Trump himself retweeted it on 1st October.

Once again, a map, bathed in red is used as a way to reinforce the message that Trump's support covers literally every part of the country. Once again, the map itself is not wrong (well, it is, a bit, but we'll come to that). It is exactly the map you'd use to frame your argument that you represent the country when you're threatened and are in reactionary mode.

But this binary red/blue county level map obfuscates perhaps a more truthful version. Yes, the counties that are red were won by Trump but the map pays no attention to population distribution, population density or, indeed, the electoral college voting system. It's an artifact of the cartographic technique, the many decisions over time that have determined where boundaries are drawn, and not where people voted and in what amounts. The addition of the statement "Try to impeach this" is a challenge as well as a threat, and its juxtaposition with the map frames the argument as "us vs them". The map creates a strong connection between Trump and what he perceives as his all-encompassing support in the face of what he sees as Democrat and media fuelled fake news. The map is published and consumed. It breeds division as some will find it speaks to their truth, and others will be appalled since it pays no attention to their truth.
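The gap between how red a map looks and how people actually voted is easy to demonstrate with a few lines of Python. This is only a sketch. The three counties, their areas and their vote counts are entirely made up, and the function names are mine.

```python
# Hypothetical counties: (name, land area, red votes, blue votes).
# All figures are invented purely to illustrate the effect.
counties = [
    ("Rural A", 9000, 6000, 4000),
    ("Rural B", 8000, 3000, 2000),
    ("Metro C", 1000, 40000, 60000),
]


def red_area_share(counties):
    """Share of the mapped area coloured red on a winner-takes-all county map."""
    total_area = sum(area for _, area, _, _ in counties)
    red_area = sum(area for _, area, red, blue in counties if red > blue)
    return red_area / total_area


def red_vote_share(counties):
    """Share of all votes cast that went to red."""
    red_votes = sum(red for _, _, red, _ in counties)
    all_votes = sum(red + blue for _, _, red, blue in counties)
    return red_votes / all_votes


area_share = red_area_share(counties)
vote_share = red_vote_share(counties)
print(f"Red share of map area: {area_share:.0%}")
print(f"Red share of votes:    {vote_share:.0%}")
```

With these invented numbers the map is overwhelmingly red while red took a minority of the votes. Both figures come from the same data. Only the choice of what to show differs.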

As an aside there was some debate about whether the map was, in fact, the 2016 result. Some thought it bore more of a resemblance to Bush's 2004 victory. Put simply, some counties are red that were not red in 2016. The map appears to have been modified to appear more red than it really was. On closer inspection it seems that some counties that were won by Clinton, but with less than 50% of the vote (because of third candidates in the main), have been shown in red.

There's some irony in the map too. San Bernardino county is the largest in the US. It's down in the bottom left corner in California. It's blue. Yes, at a county level Clinton won it but 95% of this county is unpopulated. So there's actually more blue on the map as a function of this county's size and shape than needed to have been on the map if another cartographic technique had been used.

So Trump is a serial map-abuser. These three examples clearly show how he uses the map for dominance and to assert his apparent power and possession. This is Trump's America. He's simply the latest in a very long line of leaders, politicians, dictators and many others to use maps to try and illustrate a version of the truth that has been cartographically mediated to suit a partisan purpose. Like I said, it's not wrong to use maps to tell a certain story (apart from when the facts are clearly manipulated which is stretching truth to the realms of plain lies) but it is a case of "reader, beware".

Those that consume the maps he promotes (us!) have widely varying abilities in seeing beyond the cartographic tricks he's using. Many have little idea or motive to question what they are seeing. This, to me, is the more fundamental problem. The general population have no reason to question the maps that are presented to them. But they should. They absolutely should. I spend much of my professional life exploring how maps can be made to tell different stories and how we can imbue them with shades of the truth in different ways, for good or bad. My second book is going to be on this very topic.

In the meantime, I'd encourage you to challenge yourself to read maps and charts with a more critical eye. And to do this, go read Monmonier's "How to Lie with Maps" and the recently published book by Alberto Cairo called "How Charts Lie". You will become a much smarter consumer of graphical information by learning a little of how you are being manipulated by the maps put in front of you.